AWS Launches Massive AI Compute Cluster

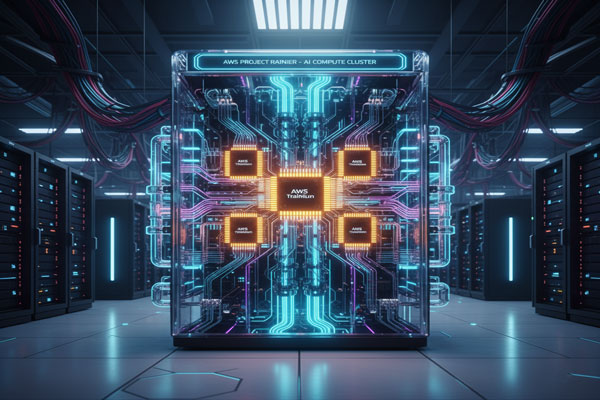

AWS has officially activated Project Rainier, unveiling one of the largest AI compute clusters in the world. This innovative infrastructure integrates nearly 500,000 Trainium2 chips, with plans for partner Anthropic to scale to over 1 million chips by the end of 2025.

Project Rainier, operational less than a year after its announcement, is already powering Anthropic’s AI model, Claude, enabling advanced training and inference. The cluster provides over five times the compute capacity Anthropic previously used, marking a significant step forward in AI infrastructure.

Named after the iconic Mount Rainier visible from Seattle, the project spans multiple U.S. data centers, creating an unparalleled scale in cloud computing. According to Ron Diamant, AWS distinguished engineer and head architect of Trainium, “Project Rainier is one of our most ambitious infrastructure projects, paving the way for next-generation AI models.”

At the heart of Project Rainier are Trainium2 chips, custom-built for AI workloads. Unlike standard processors, these chips handle enormous amounts of data at incredible speed. For perspective, a single Trainium2 chip can perform trillions of calculations per second—tasks that would take humans tens of thousands of years to complete.

Project Rainier combines UltraServers, each holding 64 Trainium2 chips with high-speed NeuronLink connections, into massive UltraClusters. This architecture ensures rapid communication between chips, both within servers and across data centers using Elastic Fabric Adapter (EFA) technology, optimizing performance at every level.
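To give a rough sense of scale, the chip counts quoted above can be turned into an approximate number of UltraServers. This is only a back-of-the-envelope sketch: it assumes every chip sits in a full 64-chip UltraServer and rounds "nearly 500,000" up to 500,000, neither of which the source states. The function name is hypothetical.

```python
import math

# Figure taken from the article: each UltraServer holds 64 Trainium2 chips.
CHIPS_PER_ULTRASERVER = 64

def ultraservers_needed(total_chips: int,
                        chips_per_server: int = CHIPS_PER_ULTRASERVER) -> int:
    """Return the number of UltraServers required to house total_chips.

    Assumption (not from the source): all chips are deployed in
    UltraServers, and a partially filled server still counts as one.
    """
    return math.ceil(total_chips / chips_per_server)

# "Nearly 500,000" chips today, rounded to 500,000 for illustration.
current = ultraservers_needed(500_000)
# Stated target of over 1 million chips; 1,000,000 is used as a lower bound.
target = ultraservers_needed(1_000_000)

print(f"Approx. UltraServers today:  {current:,}")
print(f"Approx. UltraServers target: {target:,}")
```

Under these assumptions the cluster would consist of several thousand UltraServers today, roughly doubling at the stated target.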

AWS designs its own hardware and software, giving full control over every layer of the system. This vertical integration allows the company to troubleshoot, optimize, and innovate quickly, ensuring high reliability for such a vast AI compute cluster.

AWS continues its commitment to renewable energy and resource efficiency. The electricity consumed by its operations is matched with 100% renewable energy, and Project Rainier’s data centers incorporate energy-efficient cooling and water-saving designs. For instance, some sites rely on outside air for most cooling needs, significantly reducing water use compared to industry standards.

Project Rainier doesn’t just enhance Claude—it establishes a blueprint for AI at scale, enabling breakthroughs across medicine, climate science, and other complex fields. Like its namesake peak, Project Rainier stands as a landmark achievement, redefining the possibilities of AI computing—chip by chip.

Source: Amazon | October 29, 2025

Read more: https://www.aboutamazon.com/news/aws/aws-project-rainier-ai-trainium-chips-compute-cluster

FAQ:

Q1: What is Project Rainier and why is it important?

A1: Project Rainier is one of the world’s largest AI compute clusters, launched by AWS. It uses hundreds of thousands of Trainium2 chips to power advanced AI models like Claude, enabling faster and more accurate AI training and inference.

Q2: What are Trainium2 chips used for?

A2: Trainium2 chips are custom-built for AI workloads and can perform trillions of calculations per second. They accelerate the training of complex AI models and handle these workloads more efficiently than traditional general-purpose hardware.

Q3: How does Project Rainier operate across AWS data centers?

A3: The project uses UltraServers and UltraClusters to enable high-speed communication between chips and servers via NeuronLink and Elastic Fabric Adapter (EFA) technologies, ensuring maximum performance and scalability across multiple data centers.

Q4: How does AWS ensure sustainability and energy efficiency in Project Rainier?

A4: AWS matches the electricity its operations consume with 100% renewable energy and uses advanced cooling designs and water-saving techniques to reduce energy consumption and environmental impact, making Project Rainier one of the most sustainable AI infrastructure projects.
